More notes on lab data storage

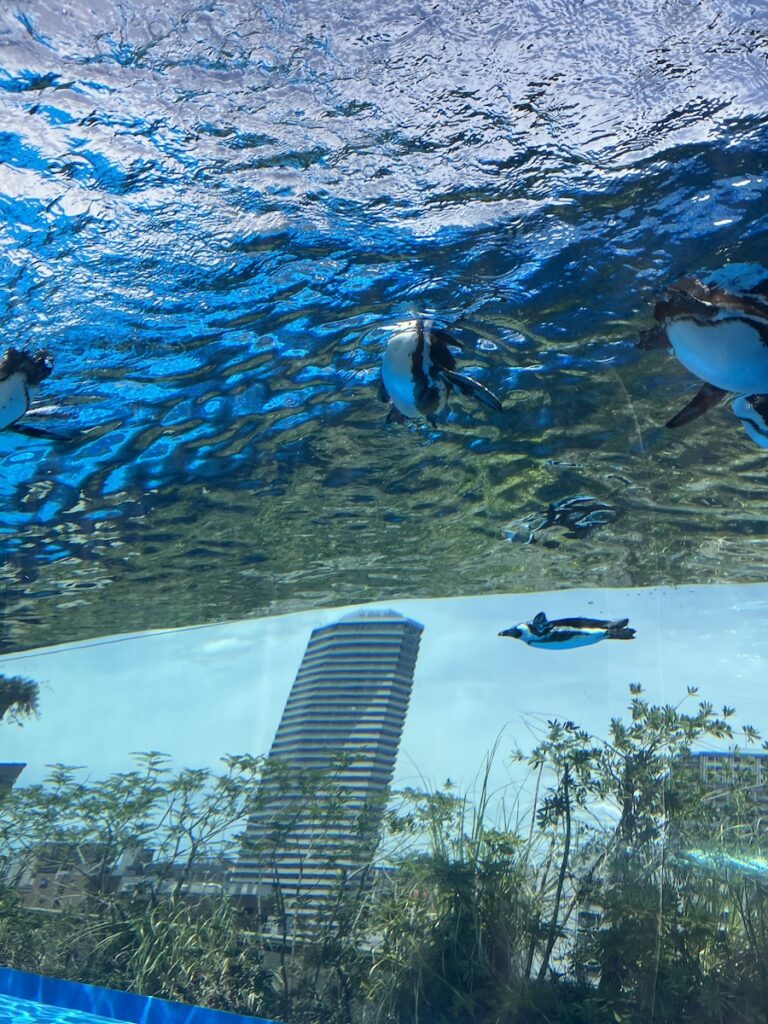

I was walking around an aquarium a couple of days ago and I saw a young person taking long videos of the fish in the tanks. Through the glass, glare and all, in a crowd chatting around the tank. Maybe they’ll watch that long video some time, or maybe they never will. Kids have to learn what to take pictures of. Some photos and videos have more value than others. Some pictures will get shared, and enjoyed by family members. Some pictures and videos will be viewed years later with nostalgia. Others just aren’t very interesting and are not worth archiving.

Which reminds me of lab data storage. We redid our scheme recently. We had been doing full versioned backups of our server, but with large datasets, that quickly becomes unwieldy. A dataset with relatively small changes or preprocessing ends up getting stored multiple times. I was just throwing more drives at the problem for a while, but now we have a more efficient solution.

We still have a fully versioned backup of the main server, so we can step backwards if there are accidental deletions or corruptions. But we try to keep the data on it to a minimum. Just stuff for papers we’re working on right now. Data that has either been published or is otherwise not under active analysis is put onto a simple mirrored drive setup. That way we can tolerate a drive failure, and we aren’t taking up extra space with versioned backups.

In general, I group my data sets into four classes.

1. Live analysis data: that goes in the cloud (also on the lab server).

2. Recent data: that goes on the lab server with versioned backup.

3. Older data: that goes in semi-cold storage, on the server but with a non-versioned backup.

4. Really old data: that is deleted. More on that below.
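The four classes above can be sketched as a small decision function. This is only an illustration, not something we actually run: the `Dataset` fields, the `storage_tier` name, and especially the `DELETE_AFTER_YEARS` cutoff are assumptions made up for the example, since in practice the call on what counts as "really old" is a judgment made dataset by dataset.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical cutoff for "really old" data; the real decision is a judgment call.
DELETE_AFTER_YEARS = 5


@dataclass
class Dataset:
    name: str
    under_active_analysis: bool  # someone is analyzing it right now
    for_current_paper: bool      # belongs to a paper still in progress
    last_used: date              # last time anyone touched it


def storage_tier(ds: Dataset, today: date) -> str:
    """Map a dataset onto one of the four storage classes."""
    if ds.under_active_analysis:
        return "cloud + lab server"
    if ds.for_current_paper:
        return "lab server, versioned backup"
    years_idle = (today - ds.last_used).days / 365.25
    if years_idle < DELETE_AFTER_YEARS:
        return "lab server, mirrored (non-versioned)"
    return "delete"


if __name__ == "__main__":
    today = date(2024, 1, 1)
    example = Dataset("published_recordings", False, False, date(2022, 1, 1))
    print(example.name, "->", storage_tier(example, today))
```

The ordering of the checks matters: active use always wins over age, so a dataset only becomes a candidate for deletion once nobody is working on it and it has sat idle past the cutoff.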

Back in 2017, I wrote about my thoughts on cloud vs. on-campus server storage, and that’s still basically what I think. I use the cloud for documents, figures, etc., but I only put raw data in the cloud if I’m working on it right now. That way I can keep working at home, in the lab, or while traveling with little friction. I use a server on campus for most of my data storage. That’s cost-effective, especially if the campus IT understands exactly what you want and can price it accordingly.

Tape backups: For bang for your buck, LTO tape backup is a good way to go. Just make sure that the software, storage, testing/verification, personnel, etc. all work well. As cheap as tape is, I don’t use it. Here’s why…

If it’s not worth keeping live, then it might not be worth keeping. It is natural to not want to part with data. But honestly, after the project is published, and a few years pass, the likelihood of ever using the original raw data dwindles.

There are exceptions. We have data sets that we’ve shared many years after we were done with them. However, even in those cases, the data set that is shared is typically the spike times and other experimental metadata. The actual raw data isn’t typically redistributed. Or even accessed. It can be, and I’m not arguing against it. I’m just stating that, in my experience, most scientists interested in analyzing existing data sets don’t even want the raw data. They aren’t familiar with the nuances of the data processing (spike sorting, segmentation, etc.), and it is more efficient to share a more processed version than the raw data.

Some scientists probably back up more data than they need to. They are data hoarders. There may be little harm in that, and it’s good to at least err on the side of over-preservation. In some cases, though, it can get in the way of more productive activities, for example if it becomes expensive relative to other lab expenditures. I know of one case where a lab was actually using quite a bit of physical space to store old raw data that probably no one was ever going to try to access again. I’m probably the opposite of a data hoarder. I like to just get rid of it. I don’t want the clutter. And I’m partly this way because I have had so many cold backups fail on me.

There are many failure modes for offline backups. Any media (tapes, hard drives, or solid state memory like flash drives) can accumulate flipped bits over time, corrupting data. Even a small corruption can render a huge file unreadable. I’ve lost data due to corruption on tape, hard drives, CDs, and flash memory drives. I’ve also had successful backups work on all of these media. Typically, I find corruption to be worse for media that is not live, e.g., hard drives left powered down, tapes, or flash memory. And so I just don’t use real cold storage anymore. If I don’t want to spend the money to keep it on the server and/or in the cloud, then maybe I don’t need it that badly.
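For anyone who does keep offline copies, one common way to at least notice flipped bits is to record a checksum for every file when the backup is written and re-check it later. The sketch below is a minimal illustration using Python’s standard `hashlib`, not a description of our setup; the function names `sha256_of`, `build_manifest`, and `find_corrupted` are made up for the example. Note that it only detects corruption. It cannot repair anything.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large raw data files need not fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Record a checksum for every file under root (run when the backup is made)."""
    return {
        p.relative_to(root).as_posix(): sha256_of(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def find_corrupted(root: Path, manifest: dict[str, str]) -> list[str]:
    """Return files that are missing or whose contents no longer match the manifest."""
    bad = []
    for rel, expected in manifest.items():
        p = root / rel
        if not p.is_file() or sha256_of(p) != expected:
            bad.append(rel)
    return bad
```

A check like this is only useful if someone actually runs it on a schedule, which is part of the ongoing cost of cold storage.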

And if I’m wrong, and I’ve deleted old data that I wish I could recover, then maybe I should just redo the study! Because I do experiments. I don’t make rare, once-in-a-lifetime astronomical observations. I measure things that should be easy to replicate, probably even better than before.