Autonomous vehicles vs. mice vs. bouncy balls

I have a little piece I often reuse. It goes something like this.

We know that visually guided navigation is a hard problem even for state-of-the-art AI because we’re pouring billions of dollars into R&D for self-driving cars and they still fail in situations where novice drivers don’t. Waymo is pretty good, but they have tons of cameras, lidar, and radar units. Tesla is going vision-only, and nearly always has to be supervised, despite an array of high-resolution cameras and kilowatts of on-board processing power. And regardless, in all cases this is just for highly stereotyped roads and places that are designed for vehicles.

By contrast, running on less than 1 watt of power, and in a fraction of a second, a mouse can navigate to a place in this room that it can get to and I can’t. It won’t make any mistakes. It won’t run into a wall. It won’t go in pointless loops. Despite how unnatural, how out-of-distribution this room is, and despite how poor the resolution of mouse vision is, the mouse will recognize its affordances and navigate to a place that it can get to and I can’t.

I think this is a pretty compact way of describing a visual navigation problem and highlighting how evolution has led to animals that are wonderful at generating robust behavior to solve the problem. Yet humankind still has not built a system that can do the same.

I use this piece a lot. Too often, to be honest, but it’s useful to me.

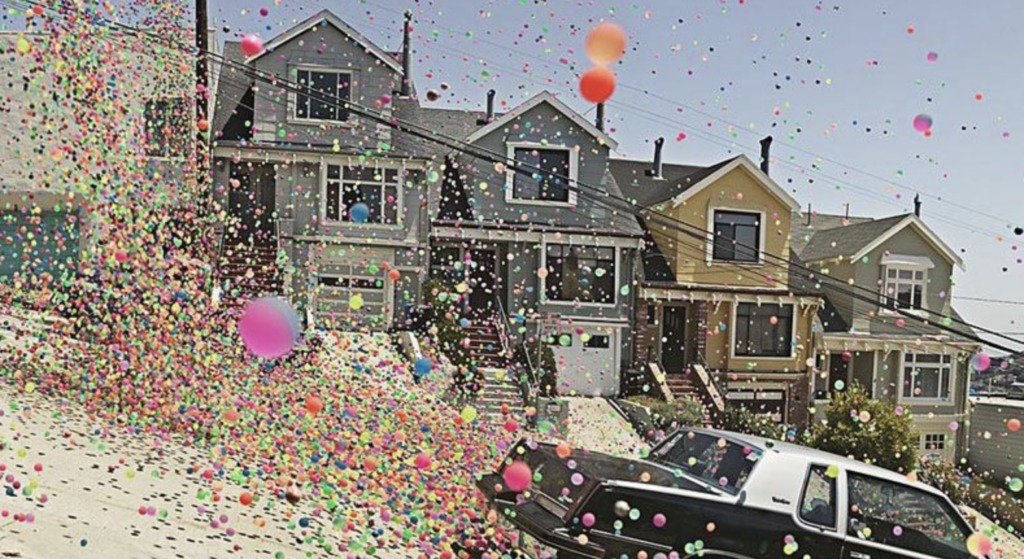

Then, one day, my wife Prof. Ikuko Smith, DVM PhD, said, “Our daughter can throw a small bouncy ball in this room and often get the same result. It will end up under a couch or table or someplace it can get to and you can’t.”

Goddammit. That’s an excellent evisceration of my thesis. How did I ever convince a person like her to pair up with me?

Sure, the bouncy ball is inanimate, and it will often come to rest in an easy-to-access location. But I love the point. The algorithm needn’t be very complex.

It reminds me of when my father and I were watching sanderlings on the beach in Santa Barbara (not my video, but if you’re curious). I had just started graduate school at UCLA and he flew out to hang out with me for a bit. I didn’t know what to do, as I had just moved to California myself, but I remembered that he had visited Santa Barbara when I was a kid, so we drove up there. And now I live here.

I said that I was impressed at how precise and reliable the birds were at navigating the waves. People would get drenched by waves occasionally, but the birds seemed to know right when to retreat, and when to venture far down the shore. My dad listened patiently, and then said something like, “It’s maybe not that hard of a calculation, they’re just used to it. They know what it should look like, and they have lots of practice.” It was a down-to-earth, common-sense take. Maybe it’s hard for me, and I’d knock myself out developing an algorithm to reproduce the behavior, but that doesn’t mean it’s actually hard. That’s just what humankind learned about visual object and character identification through ImageNet and AlexNet. It’s not actually that hard, you just need a more organic system (e.g., a CNN). Tons of training data + backprop can help you design the system, but however you get to the solution, the end implementation (i.e., inference time) is not that hard.
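To make the "inference is not that hard" point concrete, here is a toy sketch of what a CNN does at inference time: convolve, rectify, pool, pick the biggest score. This is nothing like AlexNet in scale, and everything in it is an assumption for illustration. The kernels are hand-picked rather than learned, and the function names (`conv2d`, `classify`) and the two labels are hypothetical. In a real network the weights would come from training data plus backprop.

```python
# Toy CNN-style inference in plain Python.
# Assumption: weights are hand-picked for illustration, not learned.

def conv2d(image, kernel):
    """'Valid' 2D cross-correlation of a 2D list with a 2D kernel."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh) for dj in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def relu(fmap):
    """Zero out negative responses."""
    return [[max(0.0, v) for v in row] for row in fmap]

def global_max_pool(fmap):
    """Keep only the strongest response anywhere in the feature map."""
    return max(max(row) for row in fmap)

# Hypothetical hand-set filters: one likes vertical bars, one horizontal.
KERNELS = {
    "vertical":   [[-1, 2, -1], [-1, 2, -1], [-1, 2, -1]],
    "horizontal": [[-1, -1, -1], [2, 2, 2], [-1, -1, -1]],
}

def classify(image):
    """Score the image with each filter and return (best label, all scores)."""
    scores = {
        label: global_max_pool(relu(conv2d(image, kernel)))
        for label, kernel in KERNELS.items()
    }
    return max(scores, key=scores.get), scores

# 5x5 test images: a vertical bar and a horizontal bar.
vertical_bar = [[0, 0, 1, 0, 0] for _ in range(5)]
horizontal_bar = [[0] * 5, [0] * 5, [1] * 5, [0] * 5, [0] * 5]

label_v, scores_v = classify(vertical_bar)
label_h, scores_h = classify(horizontal_bar)
print(label_v, scores_v)
print(label_h, scores_h)
```

The whole forward pass is a few nested loops of multiply-and-add. The hard part, which this sketch skips entirely, is finding good weights.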

I hope to hit upon a similar insight with the mouse vs. AI stuff we’re doing. Generating robust behavior in complex settings is maybe not that hard, but until you have the recipe, it seems hard. Let’s figure out how to make the system.