Rigor for everyone

It’s right there in the name of this blog. A double entendre: (1) for experimental apparatus rigs and the people who build them, aka “riggers”; and (2) rigor, an important principle, both generally and specifically in scientific research. I guess it’s a homophone then (rigor/rigger)? Or both a homophone and a double entendre? At any rate, it’s a paronomasia.

In scientific research, I am interested in how we can avoid fooling ourselves. Scientists are, by training and nature, appreciative of evidence, quantified uncertainty, and updating models or beliefs. Still, we aren’t perfect, and there’s room for improvement. For example, how can we avoid letting entire fields wander off into areas that lack a firm, rigorous foundation?

More generally, in everyday affairs, policy, and politics, there is also room to enhance our rigor. This is a somewhat thornier problem to me. How do we equip ourselves and our neighbors to avoid fooling ourselves? What are the antidotes to charlatans, conspiracy theories, and other nonsense?

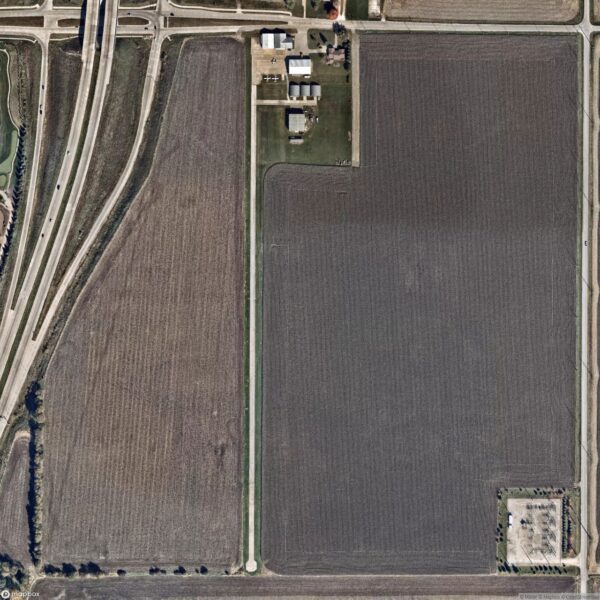

I’m haunted by the discussions I had with my father. He passed away years ago, but today would have been his 78th birthday. (The photo here is of the private airfield he liked to fly out of. He flew single-engine planes as a hobby.) He was fascinated by science and engineering, was an excellent programmer and rigorous thinker in many ways, but he was still vulnerable to charlatans, conspiracy theories, and other nonsense. I assume that I am not invincible either, which is part of the point I’m making. We humans may vary in our susceptibilities, but I doubt that very many of us are truly immune.

I talked to my father about how scientists value evidence and precise discussions of uncertainty. He was receptive, and appreciated the point, but still, he loved searching for evidence and arguments that upheld his beliefs rather than ones that challenged them. Many people do.

Ultimately, raw evidence isn’t enough. The advance of human science and technology is a social enterprise: researchers must get eyeballs on their work and evidence, and then persuade that audience that the evidence is strong enough to warrant updating their models or beliefs. 1. Evidence. 2. Attention. 3. Persuasion. People can skip the first step, simply garnering a big audience and persuading them with a lot of hot air, then filling in the evidence later, often with the window dressing of scientific rigor, which only specialists in the field would see through. This leads to situations where conspiracy theories take hold, and disagreements on facts, history, and current events drive people apart, with each side feeling righteous and evidence-based.

Do you have thoughts on how we address this? Accountability is one way. What are some other practical strategies?